Integrating legacy systems with newer technologies while the system grows

The most dangerous systems in an organization are often the ones no one complains about. They sit there, processing transactions or handling core data, running without throwing critical errors. But these legacy systems end up holding back everything you try to build next. A new feature is limited because the old authentication model can’t support it. A scaling initiative stalls because a ten-year-old database schema has become the bottleneck.

That’s when “don’t touch it, it works” turns into a real problem. The system doesn’t break in an obvious way, but it starts slowing down growth. The cost shows up in missed opportunities and in the increasingly complex workarounds the team has to build. You end up creating services whose main job is to adapt data into a format the old system accepts, which adds latency and yet another point of failure.

When “don’t touch it” becomes a real problem at scale

The pressure starts showing up in unexpected places. Product managers stop asking for certain features because they already know the work would mean touching the “core” and would turn into a six-month project. Engineers spend more and more time writing code full of validations and edge-case handling to deal with the quirks of the legacy system and its inconsistent data. The operational cost goes beyond servers. It also includes the mental load on each developer who has to keep in their head how the legacy system actually behaves, even when that isn’t documented.

Why a full rewrite is almost never the answer

When the problems become more visible, the first instinct is usually the most radical one: rewrite everything from scratch. Throw away the old system and build a new one with more modern tools and practices. On paper, it sounds straightforward. In practice, it’s a risky bet that almost never delivers as expected.

The project routinely takes far longer than estimated, often two or three times as long. While that massive effort is underway, the business doesn’t stop. The market changes, new competitors show up, and the product still needs to evolve. The team rebuilding the system ends up chasing a moving target, constantly reprioritizing and falling further behind. Meanwhile, the legacy system still needs maintenance and fixes, splitting focus and resources.

Keeping the business running while dealing with legacy systems

A full rewrite is as much an organizational project as it is a technical one. A large part of the cost comes from losing the business knowledge embedded in the code over the years. Fixes, edge-case handling, and customer-specific rules are all there. Almost none of it is well documented, and the people who wrote it are often no longer at the company. The new team ends up spending years rediscovering that behavior, and it’s not uncommon to recreate old bugs before fixing them again.

Freezing product development for two years is a risk most companies can’t afford. You stop delivering value while your competitors keep moving.

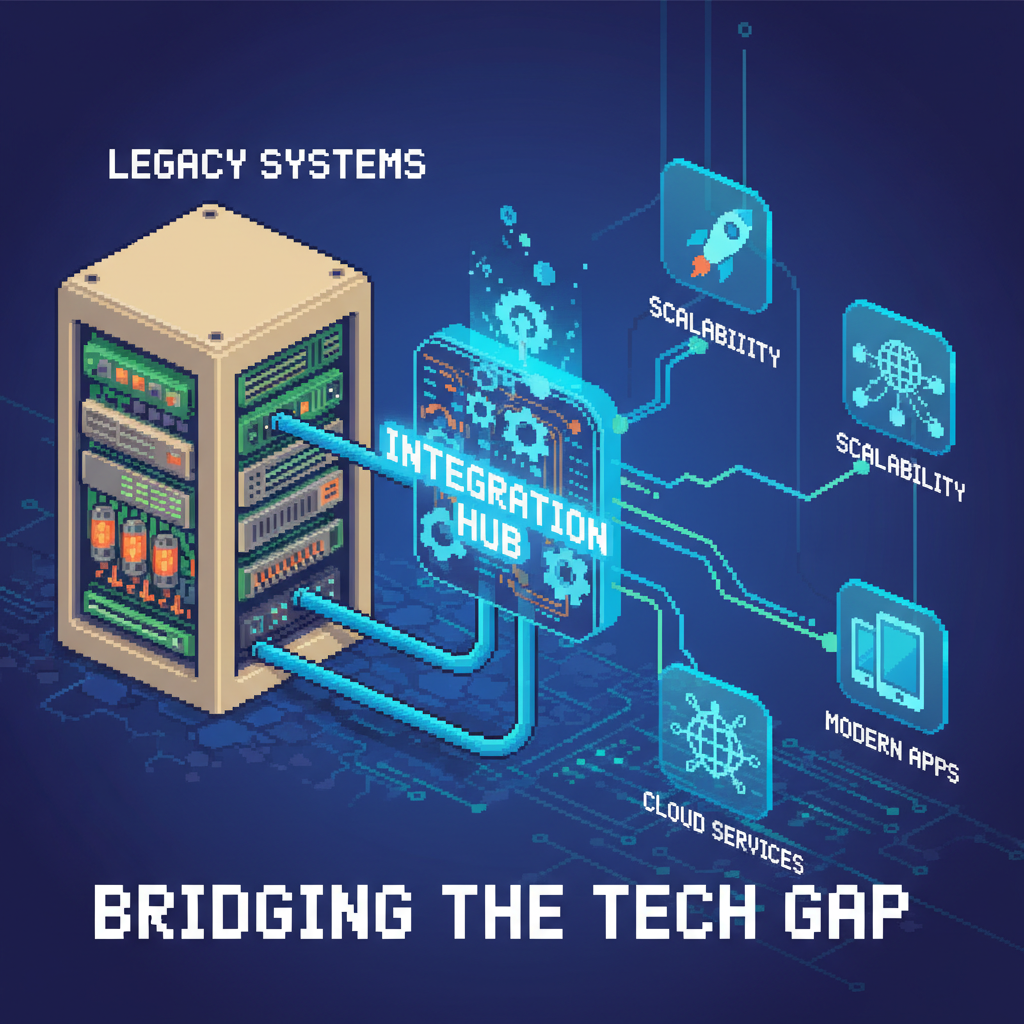

A better path: build a new layer around the old core

A more pragmatic approach is to treat the legacy system as a stable, even if imperfect, core and build a new layer around it. This lets you introduce new technologies and architectures gradually, without interrupting the flow of value to the business. The goal is to progressively reduce the responsibilities of the legacy system until it becomes irrelevant.

Applying the Strangler Fig pattern to legacy systems

This approach is known as the Strangler Fig pattern. You identify a specific capability inside the monolith, build it as a separate new service, and redirect calls to this new service instead of the old one. Over time, you repeat the process, extracting more parts until the original monolith disappears or becomes something small and manageable.

The process is straightforward, but it requires discipline:

- First, choose a well-defined business capability to update. Don’t start with the most complex part or the most trivial one. Pick something that delivers quick value or unblocks other teams.

- Then build a new service or an API layer to encapsulate that functionality. This new service becomes the entry point for that domain and, over time, its source of truth. At first, it may still fetch data from the legacy system, but its interface is clean and modern from day one.

- Finally, gradually move traffic and new features to this service. You can use proxies, API gateways, or feature flags to route a small portion of traffic and monitor it closely. As confidence grows, increase the traffic and start building everything new there.

This method reduces risk. If something goes wrong, you can quickly fall back to the legacy system. You keep shipping features and get feedback much earlier.
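The gradual traffic shift and the fallback described above can be sketched in a few lines. This is a minimal illustration, not a production gateway: the handler names (`legacy_get_invoice`, `new_get_invoice`), the invoice capability, and the choice of hashing the user ID into 100 buckets are all assumptions made for the example.

```python
import hashlib
from typing import Callable

Handler = Callable[[str], dict]


def in_rollout(user_id: str, percent: int) -> bool:
    """Deterministically place a user in one of 100 buckets.

    The same user always lands in the same bucket, so raising `percent`
    only ever adds users to the new path; nobody flips back and forth.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < percent


def make_router(legacy: Handler, modern: Handler, percent: int) -> Handler:
    """Build a handler that sends `percent` of users to the new service."""

    def route(user_id: str) -> dict:
        if in_rollout(user_id, percent):
            try:
                return modern(user_id)
            except Exception:
                # Any failure in the new service falls back to the legacy path.
                # A real router would also log and count these fallbacks.
                return legacy(user_id)
        return legacy(user_id)

    return route


# Hypothetical handlers standing in for the old and new implementations.
def legacy_get_invoice(user_id: str) -> dict:
    return {"source": "legacy", "user": user_id}


def new_get_invoice(user_id: str) -> dict:
    return {"source": "new", "user": user_id}


# Start by sending roughly 5% of users to the new service.
get_invoice = make_router(legacy_get_invoice, new_get_invoice, percent=5)
```

Hashing the user ID instead of picking randomly per request keeps each user's experience consistent while the percentage grows, which also makes issues easier to reproduce during the rollout.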

Deciding where to start connecting systems

The hardest part of this strategy is choosing where to start. A bad choice can stall the entire effort. The decision needs to consider real business and technical problems, not just technical preference. It’s worth looking at where the legacy system is causing the most day-to-day impact.

Choosing the right legacy components to tackle

Here are some criteria to find a good starting point:

- High-traffic areas that directly affect user experience. Is there a slow endpoint being called on every front-end request? Wrapping it in a new service with caching can deliver a quick, visible gain.

- Parts that block new features. Is the product team stuck because the billing module is inflexible? Extracting it into a new service can unlock direct business growth.

- Problematic or inconsistent databases. Is there a massive table no one wants to touch? Creating a new service with its own storage and syncing via events can isolate that critical point.

- Areas with the highest operational cost or risk. Which part generates the most incidents? Isolating it into a well-monitored independent service can significantly improve stability.
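As an illustration of the first criterion, wrapping a slow legacy call behind a cache can be as small as the sketch below. It assumes an in-memory, per-process cache with a fixed time-to-live; `CachedLookup` and its parameters are hypothetical names, and a real service would more likely use a shared cache and an explicit invalidation strategy.

```python
import time
from typing import Callable, Dict, Tuple


class CachedLookup:
    """Wrap a slow lookup (e.g. a legacy endpoint) with a small TTL cache."""

    def __init__(
        self,
        fetch: Callable[[str], dict],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch          # the slow call into the legacy system
        self._ttl = ttl_seconds      # how long a cached value stays fresh
        self._clock = clock          # injectable so tests can control time
        self._entries: Dict[str, Tuple[float, dict]] = {}

    def get(self, key: str) -> dict:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]          # fresh hit: the legacy system is not called
        value = self._fetch(key)     # miss or expired: go to the legacy system
        self._entries[key] = (now, value)
        return value
```

The TTL puts an upper bound on how stale a response can be, which is the trade-off to agree on with the product team before putting a cache in front of legacy data.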

Next steps to connect the new with the old

After choosing the starting point, the next challenge is integrating without carrying over the legacy system’s problems. The goal is to keep the new system isolated from that complexity.

- Use API gateways to control access and transform data. A gateway can route traffic to the new or old service based on headers, routes, or users. It can also act as a protection layer, translating legacy structures into more modern formats.

- Use events to synchronize data. Avoid tight coupling to the legacy system through synchronous calls. That just creates a distributed monolith. Prefer publishing events when data changes and let new services consume those events to keep local copies up to date.

- Write solid tests at integration points. Tests that validate the requests and responses exchanged between services catch breaking changes on either side before they reach production. They should run on every deploy.

- Define clear ownership. The team responsible for the new service also owns the integration with the legacy system. Without that, these connections tend to degrade over time.
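To make the translation and event-synchronization ideas above more concrete, here is a minimal sketch of a new service keeping a local copy from change events. Everything specific in it is an assumption for illustration: the legacy field names (`CUST_NO`, `FNAME`, `LNAME`, `STATUS_CD`), the event shape with `payload` and `version`, and the rule that versions are positive and increase with each change.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Customer:
    """The clean model the new service exposes."""
    id: str
    full_name: str
    active: bool


def translate_legacy_customer(row: dict) -> Customer:
    """Map a (hypothetical) legacy record into the new model.

    Legacy quirks such as padded strings and status codes stop here
    and never leak into the rest of the new service.
    """
    return Customer(
        id=str(row["CUST_NO"]),
        full_name=f'{row["FNAME"].strip()} {row["LNAME"].strip()}',
        active=row["STATUS_CD"] == "A",
    )


class CustomerReadModel:
    """Local copy of customer data, updated by consuming change events."""

    def __init__(self) -> None:
        self._customers: Dict[str, Customer] = {}
        self._versions: Dict[str, int] = {}

    def handle(self, event: dict) -> bool:
        """Apply one change event; return False if it is stale or a duplicate."""
        customer = translate_legacy_customer(event["payload"])
        version = event["version"]
        # Versions are assumed to start at 1, so 0 means "never seen".
        if version <= self._versions.get(customer.id, 0):
            return False
        self._customers[customer.id] = customer
        self._versions[customer.id] = version
        return True

    def get(self, customer_id: str) -> Optional[Customer]:
        return self._customers.get(customer_id)
```

Ignoring stale and duplicate versions makes the consumer safe to run against a broker that redelivers or reorders messages, and the translation function is a natural place to pin down with tests that run on every deploy.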