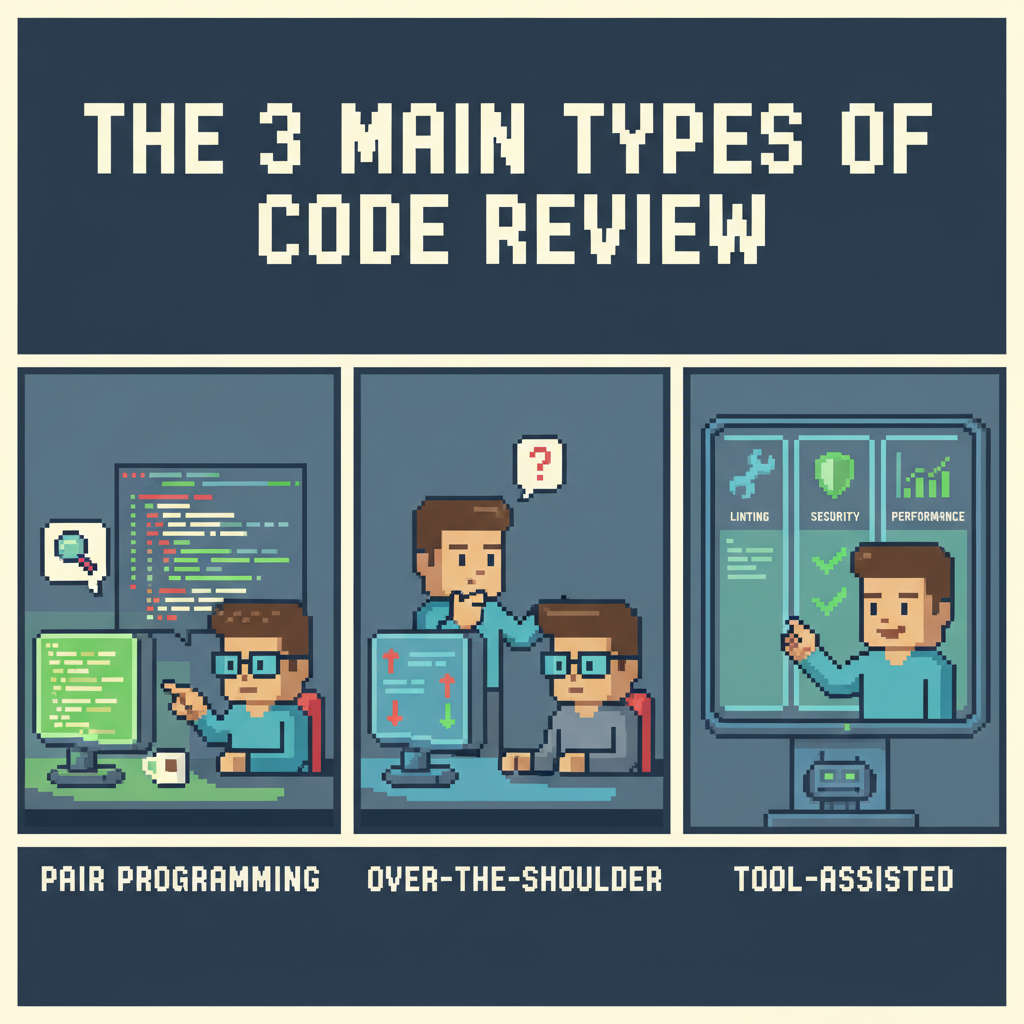

The 3 main types of code review

Everyone has seen that pull request that just doesn’t move. It stays open for days, collecting comment after comment. One person points out a stray comma, another suggests renaming a variable. Meanwhile, the most important change, the one that introduces problematic coupling between parts of the system, goes unnoticed and only shows up when it breaks in staging. The big problems slip through, and the process gets slow and full of noise.

This happens when the PR comment thread tries to solve everything at once. We end up mixing different types of code review without realizing it. The person who wrote the code doesn’t know what kind of feedback to ask for, and the reviewer doesn’t know what kind of feedback to give. In the end, it turns into a misaligned process, focused on small details while things that actually impact the system slip through.

Why most code review processes don’t work well

This back and forth comes from a lack of clarity. We expect a single code review to cover everything: find bugs, check standards, serve as a learning opportunity, and still discuss architecture. The reviewer doesn’t really know what their role is. Is it to catch every bug, enforce team conventions, or question the approach?

When everything happens in the same thread, the conversation gets lost. A formatting comment gets mixed with a question about long-term maintainability. This constant context switching takes time. The person who wrote the code starts getting annoyed by small details, and the reviewer gets frustrated when the important points get buried among a bunch of minor comments. We need to separate these things.

Breaking code review into parts

Instead of treating every review as a single thing, you can split it by objective and put each type of work in the right place, whether that’s a person or automation. That way, there’s more time to focus on what actually requires analysis and context.

1. Style review

This is the most basic level of review. It checks team standards, formatting, variable names, and similar conventions. These are the comments that show up the most and add the least value.

This entire category should be automated. Some tools, including those powered by AI, go further and apply team conventions directly in the PR. An agent can check things like:

- Correct formatting and indentation.

- Variable names following defined standards.

- Adherence to the project’s style guide.

- Unused imports or variables.

By the time someone looks at the PR, all of this should already be handled. Zero human comments about whitespace or brace placement. That alone removes a huge distraction.
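To make the idea of an automated style check concrete, here is a minimal sketch of one item from the list above: detecting unused imports. It assumes a Python codebase and uses only the standard library `ast` module. The function name `find_unused_imports` is hypothetical, and the check is deliberately simplified (it ignores `__all__`, re-exports, and names used only inside strings).

```python
import ast


def find_unused_imports(source: str) -> list[str]:
    """Return imported names that are never referenced in `source`."""
    tree = ast.parse(source)
    imported: set[str] = set()
    used: set[str] = set()

    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                # `import a.b` binds only the top-level name `a`.
                imported.add(alias.asname or alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            for alias in node.names:
                if alias.name != "*":  # star imports bind unknown names; skip them
                    imported.add(alias.asname or alias.name)
        elif isinstance(node, ast.Name):
            # Covers plain references and the base of attribute access (`os` in `os.path`).
            used.add(node.id)

    return sorted(imported - used)


sample = (
    "import os\n"
    "import sys\n"
    "from json import dumps, loads\n"
    "\n"
    "print(os.getcwd(), dumps({}))\n"
)
print(find_unused_imports(sample))  # ['loads', 'sys']
```

In practice an established linter running in CI would do this job. The point of the sketch is only that checks like this are mechanical, so they do not need a human comment.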

2. Performance review

Here the goal is to make sure the code does what it’s supposed to do, without introducing bugs, security issues, or slowness. It’s a hybrid effort: automation handles the first pass, and a person does the final validation.

Automation can identify patterns that usually signal problems:

- N+1 patterns in database access.

- Code paths that can lead to infinite loops.

- Use of deprecated functions or insecure libraries.

- Performance regressions compared to a baseline.

AI tools can flag these kinds of patterns, but they don’t have all the context to know if something is actually a problem. An N+1 might be acceptable in a small internal tool. That’s where the reviewer comes in. The tool surfaces potential risks and reduces the effort of finding everything manually. The person decides what makes sense in that context. Instead of searching for problems across the whole diff, they start from a shorter list of what could go wrong.
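As an illustration of a first-pass check that surfaces risks without deciding anything, the sketch below flags query shapes that repeat many times in a list of executed SQL statements, which is a common symptom of an N+1 pattern. Everything here is an assumption for the example: the function names, the idea that a query log is available as a list of strings, and the threshold of 5. It is a heuristic, not a real profiler.

```python
import re
from collections import Counter


def normalize(sql: str) -> str:
    """Replace literal values with `?` so queries differing only by parameter collapse together."""
    sql = re.sub(r"'[^']*'", "?", sql)   # string literals
    sql = re.sub(r"\b\d+\b", "?", sql)   # standalone numeric literals
    return re.sub(r"\s+", " ", sql).strip().lower()


def flag_repeated_queries(queries: list[str], threshold: int = 5) -> dict[str, int]:
    """Return each query shape that ran at least `threshold` times, with its count."""
    counts = Counter(normalize(q) for q in queries)
    return {shape: n for shape, n in counts.items() if n >= threshold}


# Hypothetical log from one request: one query for posts, then one per post for comments.
log = ["SELECT * FROM posts"] + [
    f"SELECT * FROM comments WHERE post_id = {i}" for i in range(10)
]
print(flag_repeated_queries(log))
# {'select * from comments where post_id = ?': 10}
```

The output is a short list for the reviewer to triage, not a verdict. Whether ten repeated queries matter depends on the context the tool does not have.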

3. Domain review

This is the most valuable part of the review. It requires understanding the business, the architecture, and where the product is going. This is where the important questions come in:

- Does this change correctly implement the business logic?

- Does this architecture make future changes easier or harder?

- Is the code readable for someone new to this part of the system?

- Is this aligned with where the team wants to take the product in the coming months?

It’s also the main moment for knowledge sharing. A more experienced engineer can teach a new pattern. Someone more junior can gain context about a part of the system they don’t know yet.

AI can help by bringing context, like highlighting parts of the code that might be impacted. But the final decision is still human. No agent can tell whether a new abstraction makes sense for the team’s experience level or the future of the product. This is where engineering time makes the biggest difference.

How to choose the right type of code review

The person opening the PR needs to make it clear from the start what the goal of the review is.

Setting clear expectations

When opening a PR, say what you expect. A simple paragraph in the description already helps. You can ask direct questions. For example: “This is a simple bug fix. I want to validate that I didn’t miss any edge cases.” Or: “This is a first draft of a feature. I want feedback on the design and data model before refining the implementation.” Or even: “I’m refactoring the authentication service. I want to know if the new interface simplifies usage for other services.”

For the reviewer, this context helps with focus. If the request is design feedback, it doesn’t make sense to spend time discussing variable names. If the goal is to validate correctness, it’s not the moment to suggest switching frameworks.

Aligning the review with the type of change

A good starting point is to align the type of review with the type of change:

- Bug fixes or urgent patches usually call for performance review only. The scope is small, and the goal is to validate the fix without introducing new issues.

- When someone new is working on a more complex part of the system, a domain-focused review and knowledge sharing make more sense. The goal is not just the code, but building shared context.

- Larger architectural changes or new services require domain and design review. That conversation should happen outside the PR, in a document, meeting, or RFC, before any code is written. The PR review then becomes just a check that the implementation follows what was already decided.
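The mapping above could be written down as a small lookup table, for example to pre-fill a PR description or pick reviewers. This is only a sketch: the names `Change`, `ReviewPlan`, and `plan_for` are hypothetical, and a real team would likely have more categories than these three.

```python
from dataclasses import dataclass
from enum import Enum


class Change(Enum):
    BUG_FIX = "bug fix or urgent patch"
    NEWCOMER = "someone new working on a complex area"
    ARCHITECTURE = "architectural change or new service"


@dataclass(frozen=True)
class ReviewPlan:
    focus: tuple[str, ...]
    design_first: bool  # True when the design should be agreed outside the PR before coding


# Review focus per change type, following the three cases described in the text.
PLANS = {
    Change.BUG_FIX: ReviewPlan(focus=("performance",), design_first=False),
    Change.NEWCOMER: ReviewPlan(focus=("domain", "knowledge sharing"), design_first=False),
    Change.ARCHITECTURE: ReviewPlan(focus=("domain", "design"), design_first=True),
}


def plan_for(change: Change) -> ReviewPlan:
    """Look up the suggested review plan for a kind of change."""
    return PLANS[change]


plan = plan_for(Change.ARCHITECTURE)
print(plan.focus, plan.design_first)  # ('domain', 'design') True
```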

How to organize your code review process

Making this change requires a few adjustments in the team’s workflow.

First, start the PR with a clear objective. A PR template helps enforce that.
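One way a template can be enforced is a small check that fails when a required section is missing or left empty. The sketch below assumes the PR description is markdown with `## ` headings and that the template has sections called "Review goal" and "What changed". Both headings and the function name `missing_sections` are made up for the example.

```python
import re

# Hypothetical section titles from a team's PR template.
REQUIRED_SECTIONS = ("Review goal", "What changed")


def missing_sections(description: str, required=REQUIRED_SECTIONS) -> list[str]:
    """Return required sections that are absent or empty in a PR description."""
    missing = []
    for title in required:
        # Capture the text after "## <title>" up to the next "## " heading or the end.
        pattern = rf"^##[ \t]*{re.escape(title)}[ \t]*\n(.*?)(?=^##[ \t]|\Z)"
        match = re.search(
            pattern, description, flags=re.MULTILINE | re.DOTALL | re.IGNORECASE
        )
        if match is None or not match.group(1).strip():
            missing.append(title)
    return missing


description = (
    "## Review goal\n"
    "This is a simple bug fix. I want to validate that I didn't miss any edge cases.\n"
    "\n"
    "## What changed\n"
    "\n"
)
print(missing_sections(description))  # ['What changed']
```

A CI job could run a check like this on the PR body and block review requests until the goal is filled in.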

Then, automate the first layer. Configure CI to run style and formatting checks before involving anyone. Use static analysis and AI tools to surface common performance issues.

You can also separate design discussions from code review. When a change has a big architectural impact, the PR thread is not the right place. Discuss it in a document, meeting, or RFC. The PR becomes just the implementation of something already agreed on.

By separating these layers, the process becomes faster and less draining. The machine handles what is repetitive and objective. People focus on the decisions that actually matter for the future of the code.