AI in SDLC: How Engineering Teams Are Redesigning Their Workflow

The current conversation about the future of engineering with AI usually revolves around developer productivity, typically measured by how fast we can generate code. But this focus misses the real bottleneck. The problem is not just the speed of writing code; it is the growing burden of everything that happens around it. Our current software development lifecycle (SDLC) models were designed for a human pace of change, and they are starting to buckle under increasingly complex systems and relentless pressure to ship faster.

You see the symptoms every day. Pull requests sit in review for days because they touch five different microservices. Flaky integration tests get quarantined instead of fixed. Onboarding a new engineer onto a project takes weeks because the system’s cognitive load is too high. We are reaching the limits of what manual processes and individual human oversight can handle. Making one part of this system faster, such as code generation, does not remove the architectural and process constraints at the root of the problem. It only creates a bigger backlog at the next bottleneck.

AI in the Day-to-Day of the Engineering Team

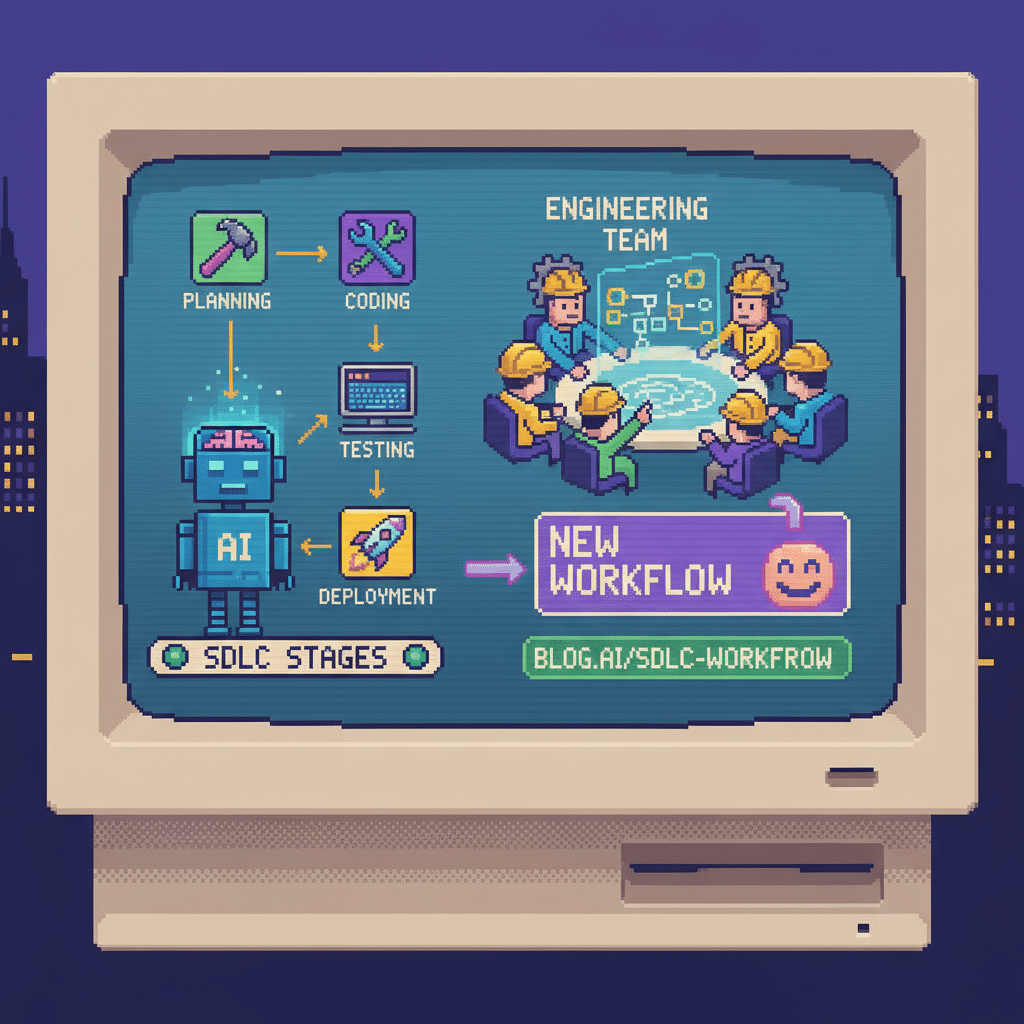

Instead of using AI only to speed up processes that are already broken, it is worth thinking about how it can change the workflow from the ground up. That means more than plugging an assistant into the IDE and assuming the problem is solved. It means rethinking the entire path from idea to production, with the understanding that AI operates at a different scale and rhythm than a human engineer.

The idea is to move away from a workflow full of manual steps and toward something more integrated and automated, where engineers focus more on decisions, review, and technical direction, and less on writing everything line by line. This makes it possible to handle a level of complexity and speed that current processes simply cannot keep up with.

The Impact of AI Across the SDLC

When you look at the SDLC from this perspective, the opportunities for change go far beyond just writing code faster.

From Generating Code to Building with Agents

We are moving beyond simply completing code in a file. The next step is working with agents: AI systems that receive a high-level specification, break the problem into tasks, write the code, create tests, and even open the PR.

In this model, the engineer’s role changes. Instead of typing out implementations, engineers focus on defining the problem clearly and reviewing the solution. The bottleneck shifts from writing code to writing good specifications. If the specification is ambiguous, the agent will produce a result that is technically correct but functionally useless, creating a new kind of rework.
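One way to reduce that rework is to make specifications structured enough to check before an agent starts. The sketch below assumes a hypothetical `TaskSpec` format with acceptance criteria and an explicit out-of-scope list. The field names and the list of vague terms are illustrative choices, not a standard, and a keyword check like this only catches the most obvious gaps.

```python
import re
from dataclasses import dataclass, field


@dataclass
class TaskSpec:
    """Hypothetical structured specification handed to a coding agent."""
    title: str
    problem_statement: str
    acceptance_criteria: list[str] = field(default_factory=list)
    out_of_scope: list[str] = field(default_factory=list)


# Illustrative words that often signal an untestable requirement.
VAGUE_TERMS = ("fast", "robust", "user-friendly", "as needed", "etc")


def find_spec_gaps(spec: TaskSpec) -> list[str]:
    """Return problems to fix before the spec is handed to an agent."""
    gaps: list[str] = []
    if not spec.acceptance_criteria:
        gaps.append("no acceptance criteria: the agent cannot tell when it is done")
    for criterion in spec.acceptance_criteria:
        for term in VAGUE_TERMS:
            # Whole-word match, so "fetch" does not trigger "etc".
            if re.search(rf"\b{re.escape(term)}\b", criterion, re.IGNORECASE):
                gaps.append(f"vague term {term!r} in criterion {criterion!r}")
    if not spec.out_of_scope:
        gaps.append("no out-of-scope list: the agent may change unrelated code")
    return gaps


if __name__ == "__main__":
    spec = TaskSpec(
        title="Add CSV export",
        problem_statement="Finance needs to export invoices.",
        acceptance_criteria=["Export must be fast"],
    )
    for gap in find_spec_gaps(spec):
        print(gap)
```

A team might run a check like this as a gate in whatever tool hands specs to the agent. When the check finds gaps, the spec goes back to its author before any code is generated.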

Detecting Problems Before They Reach Production

Our current approach to testing is largely reactive. We write tests to catch regressions from bugs we have already seen. AI enables a more predictive model. By analyzing code changes, historical bug data, and runtime telemetry, AI can estimate which changes are more likely to introduce failures and automatically generate targeted tests for those high-risk areas. It can also identify gaps in test coverage that a coverage percentage alone does not reveal, such as missing edge cases or untested interactions between services.
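To illustrate the risk-estimation idea, the sketch below ranks changed files with a hand-written heuristic rather than a trained model. Files that change often, have a history of bug fixes, and receive large edits score highest. It assumes churn and bug-fix counts have already been extracted from version control and the issue tracker. `FileHistory`, the scoring formula, and the threshold are all hypothetical, and a real system would more likely learn these weights from data than fix them by hand.

```python
import math
from dataclasses import dataclass


@dataclass
class FileHistory:
    """Hypothetical per-file history mined from version control and the issue tracker."""
    path: str
    recent_commits: int  # churn: how often the file changed recently
    past_bug_fixes: int  # commits linked to bug-fix tickets that touched the file


def risk_score(history: FileHistory, lines_changed: int) -> float:
    """Heuristic score: large edits to busy, bug-prone files rank highest."""
    # log1p damps each factor so one very large count does not dominate.
    churn = math.log1p(history.recent_commits)
    bugs = math.log1p(history.past_bug_fixes)
    size = math.log1p(lines_changed)
    return (1 + bugs) * (1 + churn) * size


def files_needing_targeted_tests(
    change: dict[str, int],
    histories: dict[str, FileHistory],
    threshold: float,
) -> list[str]:
    """Return changed files scoring at or above the threshold, highest risk first."""
    scored = []
    for path, lines_changed in change.items():
        # Files with no recorded history are treated as having no churn or bugs.
        history = histories.get(path, FileHistory(path, 0, 0))
        score = risk_score(history, lines_changed)
        if score >= threshold:
            scored.append((score, path))
    return [path for _, path in sorted(scored, reverse=True)]


if __name__ == "__main__":
    histories = {
        "billing/invoice.py": FileHistory("billing/invoice.py", 40, 12),
        "docs/readme.md": FileHistory("docs/readme.md", 3, 0),
    }
    change = {"billing/invoice.py": 120, "docs/readme.md": 5}
    print(files_needing_targeted_tests(change, histories, threshold=20.0))
    # → ['billing/invoice.py']
```

The ranked list could then feed a test-generation step, so that targeted tests are written first for the files most likely to break.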

Code Review Going Beyond the Basics

Code review is often a bottleneck, and much of reviewers' time goes to superficial checks such as style, conventions, and hunting for obvious bugs. AI can automate most of this, freeing human reviewers to focus on what truly requires deep context: architectural consistency, long-term maintainability, and business logic correctness. An AI-augmented review process can also flag potential performance regressions, security vulnerabilities, or deviations from established standards that a person might miss in a large PR. The goal is to make human review more meaningful, not to replace it.

Automating Operations and Incident Management

In production, AI can correlate alerts, analyze logs, and identify the likely root cause of an incident, often much faster than a human can, which reduces mean time to resolution (MTTR). It can also automate the creation of post-mortems, pulling together data from monitoring tools, communication channels, and deployment histories into a first draft. This keeps incident context from being lost and makes it easier to prevent the same type of problem from recurring.
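The simplest form of alert correlation groups alerts that fire close together in time. The sketch below does that with a fixed time window and uses the first service to alert as a naive root-cause hint. The `Alert` type and the five-minute window in the example are assumptions. AI-based tools typically go further by also weighing service topology, log content, and recent deployments.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Alert:
    """Hypothetical alert record; real tools carry far more metadata."""
    service: str
    message: str
    fired_at: datetime


def correlate_alerts(alerts: list[Alert], window: timedelta) -> list[list[Alert]]:
    """Group alerts that fire within `window` of the previous alert in the group."""
    groups: list[list[Alert]] = []
    for alert in sorted(alerts, key=lambda a: a.fired_at):
        if groups and alert.fired_at - groups[-1][-1].fired_at <= window:
            groups[-1].append(alert)
        else:
            groups.append([alert])
    return groups


def likely_origin(group: list[Alert]) -> str:
    """Naive root-cause hint: the first service to alert in the group."""
    return group[0].service


if __name__ == "__main__":
    t0 = datetime(2024, 1, 1, 12, 0)
    alerts = [
        Alert("frontend", "error rate above 5%", t0 + timedelta(minutes=2)),
        Alert("db", "connection pool exhausted", t0),
        Alert("api", "p99 latency above 2s", t0 + timedelta(minutes=1)),
        Alert("batch", "job overran schedule", t0 + timedelta(minutes=30)),
    ]
    for group in correlate_alerts(alerts, window=timedelta(minutes=5)):
        print(likely_origin(group), [a.service for a in group])
    # → db ['db', 'api', 'frontend']
    # → batch ['batch']
```

Grouping comes first in this design because it is cheap and deterministic. Any model that reasons about each group then works with a small input, and the incident commander can verify its output more easily.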

How Engineering Changes in the AI Era

This shift in workflows inevitably changes the structure of the engineering organization and the roles within it.

How the Engineer’s Role Is Changing with AI

As AI takes over more low-level implementation work, the senior engineer’s role becomes less about writing code and more about orchestrating systems. The most valuable skills will be problem decomposition, system design, and critical evaluation of AI-generated solutions. An engineer who can take a vague business problem, translate it into a precise technical specification for an AI agent, and then rigorously validate the result is far more valuable than someone who just codes fast. This requires a strong ability to think abstractly and keep the entire system architecture in mind.

New Skill Sets and Specialized Roles

We will likely see the emergence of more specialized roles. For example, a “Prompt Engineer” or “AI Interaction Designer,” focused on creating specifications for AI agents, may become a standard role on a team. We may also see “AI System Trainers,” responsible for tuning models based on the company’s specific code and architectural patterns to improve the quality of generated code. The classic “full-stack developer” role may become less common, as deep specialization in AI orchestration becomes more critical.

The New Challenges of Integrating AI

AI helps, but it also brings new problems, and many of them go beyond the technical side.

Overdependence on AI in Daily Work

There is a real risk that, as engineers rely more on AI for implementation, their own programming skills will deteriorate. This is especially dangerous for more junior engineers, who may not have the same opportunities to learn by doing. If a team becomes fully dependent on AI to write and fix its code, it loses the ability to solve new problems or debug complex issues when AI fails. The knowledge of why the system was built a certain way is lost, even if the code itself remains.

Managing AI-Generated Technical Debt

AI agents are very good at producing code that works for the specific case described in the prompt. They are not as good at considering long-term maintainability, architectural consistency, or the patterns a team has built over time. This can lead to a new and insidious form of technical debt: code that is clean and well documented but solves the problem in a way that does not fit the system, for example by duplicating logic that already exists elsewhere. It is the kind of code that passes all tests but gradually makes the system harder to change.

Ethical Governance and Compliance in AI

With AI writing code, reviewing PRs, and even helping with infrastructure, accountability becomes a serious issue. Who is responsible when an AI introduces a security flaw or causes a data leak? What are the limits on using proprietary code in model training? How do you ensure compliance with regulations like GDPR when AI handles sensitive data?

These questions do not have easy answers and require explicit policies and technical safeguards.

How to Adopt AI in Practice

Designing a New Workflow

- Treat AI adoption as an organizational redesign, not just a tool rollout. This requires changing how teams are structured, what is measured, and which skills are sought in hiring.

- Use AI to automate what can be automated, but keep important decisions with people. Automate code generation and testing, but keep people responsible for architectural decisions, final merge approvals, and incident command.

- Invest in training engineers to work with AI. This means building skills in prompt engineering, system-level thinking, and critical evaluation of AI output.

- Implement multi-dimensional metrics. Do not track only lines of code or story points. Measure things like the time a PR takes from creation to merge, the proportion of AI-generated code that is modified before merge, and the frequency of production incidents caused by AI-generated changes.

- Define clear rules for AI usage. Establish what AI can and cannot do. For example, code involving authentication or payments may require review by two senior engineers. Make it clear where AI responsibility ends and human responsibility begins.
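The PR-level metrics in the list above can be computed from data most teams already have. The sketch below assumes a hypothetical `MergedPR` record with four pieces of information: how many lines an AI tool proposed, how many of those a human changed before merge, and whether the PR was later linked to a production incident. Collecting those fields reliably is the hard part, and how you do it depends on your tooling. Here the incident metric is the share of AI-assisted PRs linked to an incident, which is one possible definition among several.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class MergedPR:
    """Hypothetical record of a merged pull request."""
    opened_at: datetime
    merged_at: datetime
    ai_generated_lines: int  # lines originally proposed by an AI tool
    ai_lines_modified: int  # of those, lines a human changed before merge
    caused_incident: bool  # later linked to a production incident


def workflow_metrics(prs: list[MergedPR]) -> dict[str, float]:
    """Compute PR cycle time, AI-output modification ratio, and AI incident rate."""
    if not prs:
        raise ValueError("need at least one merged PR")
    cycle_hours = [(pr.merged_at - pr.opened_at).total_seconds() / 3600 for pr in prs]
    ai_lines = sum(pr.ai_generated_lines for pr in prs)
    ai_modified = sum(pr.ai_lines_modified for pr in prs)
    ai_prs = [pr for pr in prs if pr.ai_generated_lines > 0]
    ai_incidents = sum(1 for pr in ai_prs if pr.caused_incident)
    return {
        # Median rather than mean, so one PR stuck for weeks does not skew the figure.
        "median_pr_cycle_hours": median(cycle_hours),
        "ai_lines_modified_ratio": ai_modified / ai_lines if ai_lines else 0.0,
        # One possible definition: share of AI-assisted PRs linked to an incident.
        "ai_pr_incident_rate": ai_incidents / len(ai_prs) if ai_prs else 0.0,
    }


if __name__ == "__main__":
    prs = [
        MergedPR(datetime(2024, 3, 1, 9), datetime(2024, 3, 1, 13), 100, 30, False),
        MergedPR(datetime(2024, 3, 1, 10), datetime(2024, 3, 2, 10), 0, 0, True),
        MergedPR(datetime(2024, 3, 2, 8), datetime(2024, 3, 2, 10), 50, 20, True),
    ]
    print(workflow_metrics(prs))
```

Track these values over time. A falling modification ratio alongside a rising incident rate would be worth investigating, since it may mean AI output is being accepted with less scrutiny.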

Building a Team That Adapts Quickly

In the end, none of this works without a team willing to test, fail, and learn. There must be room to experiment with new AI-driven workflows, even if they do not work perfectly at first. It is also important to speak openly about risks, such as skill loss, and adjust the process when necessary. The teams that succeed will be those that see AI not as a replacement for engineers, but as a new, powerful, and complex tool that requires skill and discipline to be used effectively.